by Rémi Coulom

Artificial intelligence in games often leads to the problem of parameter tuning. Some heuristics may have coefficients, and they should be tuned to maximize the win rate of the program. A possible approach is to build local quadratic models of the win rate as a function of program parameters. Many local regression algorithms have already been proposed for this task, but they are usually not robust enough to deal automatically and efficiently with very noisy outputs and non-negative Hessians. The CLOP principle, which stands for Confident Local OPtimization, is a new approach to local regression that overcomes all these problems in a simple and efficient way. CLOP discards samples whose estimated value is confidently inferior to the mean of all samples. Experiments demonstrate that, when the function to be optimized is smooth, this method outperforms all other tested algorithms.
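The discarding rule can be illustrated with a deliberately simplified sketch. It is not the CLOP implementation: it assumes a single parameter, replaces the regression model with an ordinary least-squares quadratic, and uses a hard cutoff in place of a confidence-based test. The function names and the `margin` parameter are hypothetical, chosen only for this example.

```python
def fit_quadratic(xs, ys):
    """Least-squares fit of y ~ a + b*x + c*x^2 (needs >= 3 distinct x values)."""
    # Sums of powers of x and of y * x^k form the 3x3 normal equations.
    s = [sum(x ** k for x in xs) for k in range(5)]
    t = [sum(y * x ** k for x, y in zip(xs, ys)) for k in range(3)]
    m = [[s[i + j] for j in range(3)] + [t[i]] for i in range(3)]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(3):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [u - f * v for u, v in zip(m[r], m[col])]
    return tuple(m[i][3] / m[i][i] for i in range(3))


def discard_confidently_inferior(xs, ys, margin=1.0):
    """Keep samples whose fitted value is not clearly below the sample mean.

    Assumption for illustration: "confidently inferior" is approximated by
    a cutoff at mean - margin * (residual std dev / sqrt(n)).
    """
    a, b, c = fit_quadratic(xs, ys)
    estimates = [a + b * x + c * x * x for x in xs]
    mean = sum(ys) / len(ys)
    residual_ss = sum((y - e) ** 2 for y, e in zip(ys, estimates))
    sigma = (residual_ss / max(1, len(xs) - 3)) ** 0.5
    threshold = mean - margin * sigma / len(xs) ** 0.5
    kept = [(x, y) for x, y, e in zip(xs, ys, estimates) if e >= threshold]
    return kept, (a, b, c)


# Noise-free toy data: the "win rate" peaks at x = 0.
xs = [-1.0, -0.5, 0.0, 0.5, 1.0]
ys = [1.0 - x * x for x in xs]
kept, coeffs = discard_confidently_inferior(xs, ys)
print([x for x, _ in kept])  # [-0.5, 0.0, 0.5]
```

In this toy run the two samples at the edges of the range are dropped, so a subsequent fit would concentrate on the region around the maximum. Repeating the fit-and-discard step on the remaining samples is what makes the regression local.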

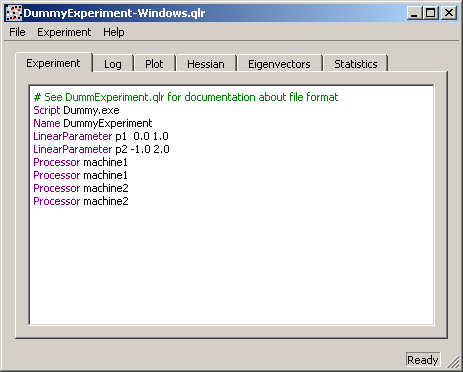

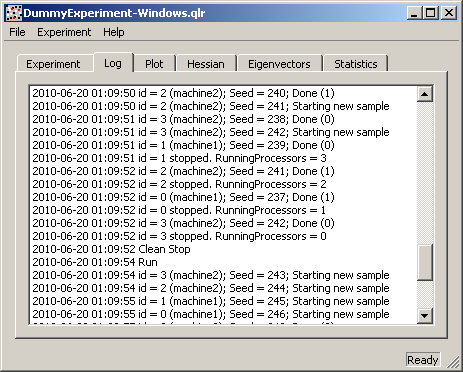

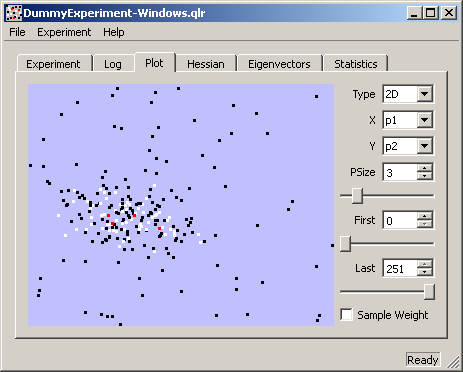

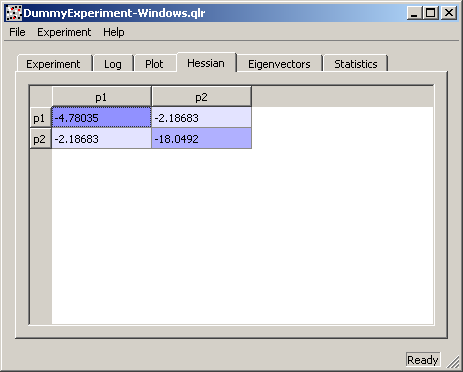

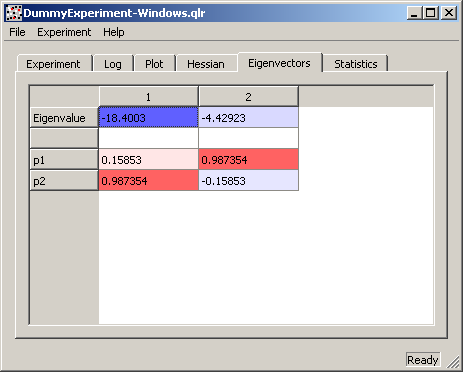

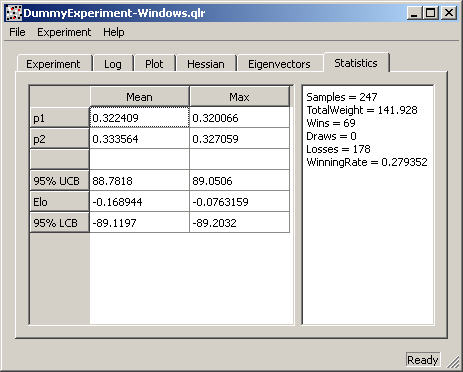

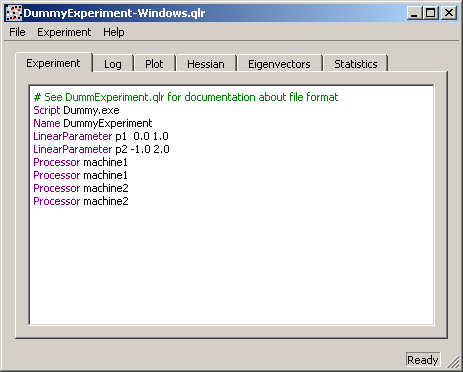

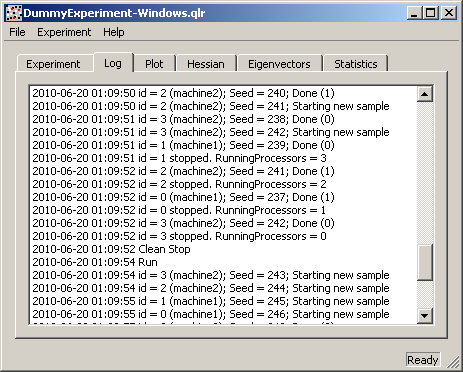

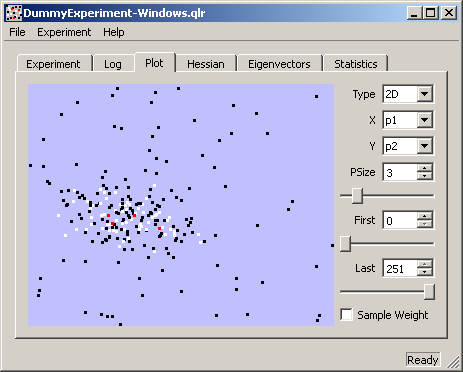

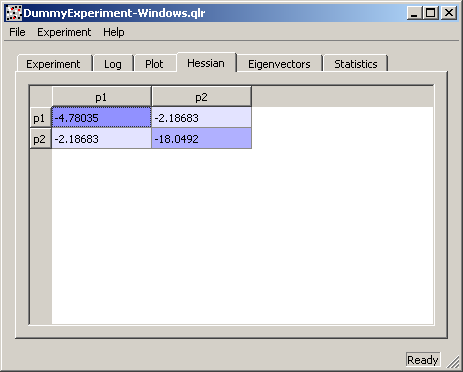

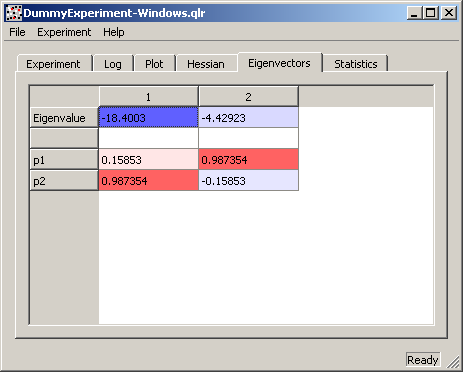

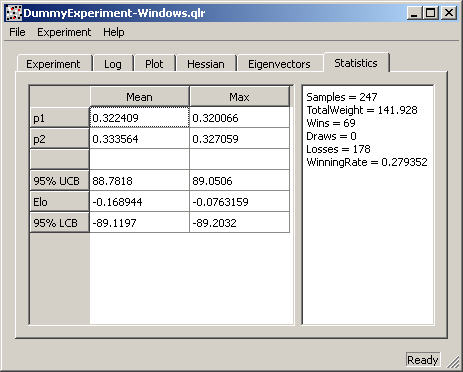

These are some screenshots of an old version in action. You can also run the program from the command line, which is more convenient on a remote cluster. The program handles chess outcomes (win/draw/loss) and integer parameters. It is written in C++ with Qt, so it can be compiled and run on Windows, Linux, and macOS.
